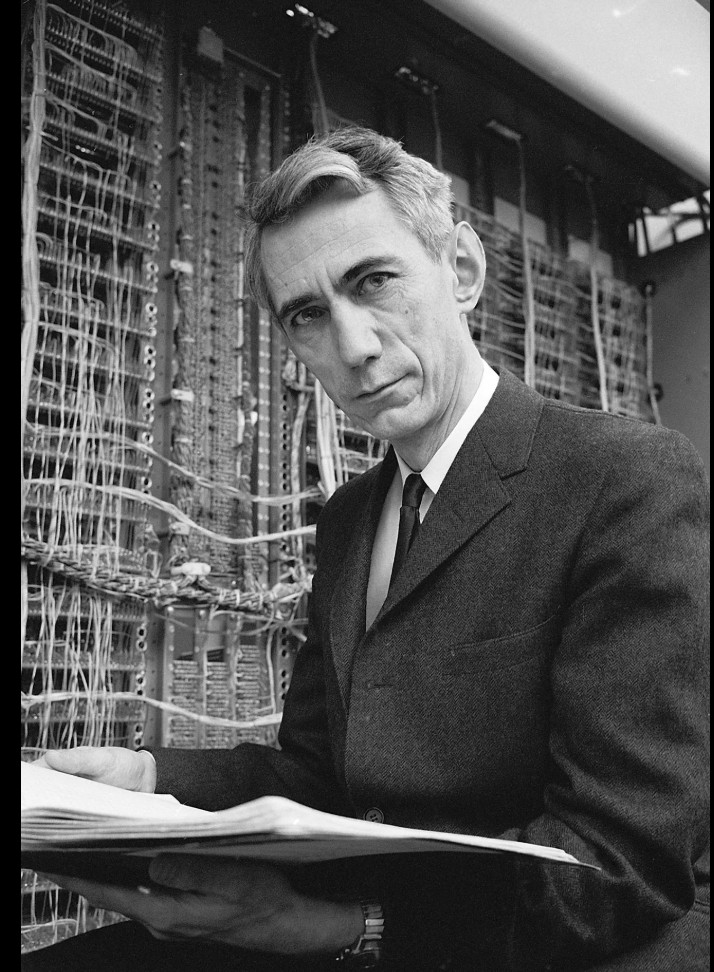

Claude Shannon (1916-2001)

If you’ve ever sent an email, made a phone call, or streamed a movie, you’ve touched the work of Claude Shannon. Whether you knew it or not, he’s the reason any of that stuff works.

Shannon was an MIT-trained mathematician and engineer who did his most famous work at Bell Labs, that legendary incubator of 20th-century innovation. In 1948, he published a paper titled “A Mathematical Theory of Communication” that essentially invented the field of information theory overnight. It’s one of those rare works that creates an entirely new discipline from scratch.

What Shannon figured out was how to measure information. Not its meaning. He wisely stepped around that philosophical landmine. Instead, he focused on its quantity. He introduced the “bit” (binary digit, a word he credited to his colleague John Tukey) as the fundamental unit of information, and showed that any message could be broken down into bits and transmitted reliably over a noisy channel, as long as you don’t try to push bits through faster than the channel’s capacity allows. This was the mathematical breakthrough that made the entire digital age possible!
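If you’d like to see what “quantity, not meaning” looks like in practice, here’s a minimal Python sketch of Shannon’s entropy measure, the average number of bits per symbol in a message. Two caveats: the function name `entropy_bits` is my own invention, and estimating probabilities from the letter counts of a single message is a simplification of Shannon’s setup, which assumes you know the statistics of the source.

```python
import math
from collections import Counter

def entropy_bits(message):
    """Average information per symbol, in bits.

    Assumption: each symbol's probability is estimated from how often
    it appears in this one message (a simplification for illustration).
    """
    counts = Counter(message)
    total = len(message)
    # Each symbol contributes p * log2(1 / p), where p = n / total.
    return sum((n / total) * math.log2(total / n) for n in counts.values())

print(entropy_bits("AAAA"))  # one symbol, no surprise
print(entropy_bits("HT"))    # two equally likely symbols, like a fair coin
print(entropy_bits("ABCD"))  # four equally likely symbols
```

Notice that the function never looks at what the message says. Scramble the letters into nonsense and the number stays exactly the same, because only the symbol frequencies matter.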

Here’s what I find most endearing about Shannon. He was a tinkerer, a gadget builder, a man who rode unicycles through the halls of Bell Labs while juggling. He built flame-throwing trumpets, rocket-powered frisbees, and a chess-playing machine long before anyone called it AI. He seemed to understand that play and deep intellectual insight are not opposites – they’re partners.

And here’s what I find maddening about Shannon. You see, he called his work “information theory,” but it wasn’t really a theory of information at all. Not in the deeper sense. Instead, his was a theory of communication, a theory of signals and channels and noise. But, by using the word “information,” he inadvertently sent generations of researchers down a variety of confusing rabbit holes. Philosophers, biologists, and physicists are all forced to untangle whether their theories refer to information in Shannon’s technical sense (a measure of uncertainty) or in the richer, philosophical sense (meaning, pattern, structure).

Of course, Shannon knew exactly what he was doing. He famously said he was avoiding the messy problem of “meaning” entirely! But the name, “information theory,” stuck, and the confusion persists. I suppose that’s both a benefit and a pitfall of being a genius sometimes. You get to name a completely new field, even if the name isn’t quite right.

Shannon gave us the tools to think about information mathematically, but he also showed us that the deepest insights often come from people who refuse to take themselves too seriously.

Essential reading: “A Mathematical Theory of Communication” (1948). Though I’ll warn you. The math is thick. For the rest of us, James Gleick’s “The Information” tells Shannon’s story beautifully.